Online labor markets, such as Amazon's Mechanical Turk, are often used to crowdsource simple, short tasks like image labeling and transcription. However, expert workers, who tend to be busy putting their knowledge to work in traditional employment, are less likely to participate in microtask systems, so it can be hard to find a sizeable number of them via online labor markets. This makes it difficult to use a Turk-like platform to complete complex jobs that require specific skill sets, which in turn keeps both wages and impact low for crowdsourcing labor markets.

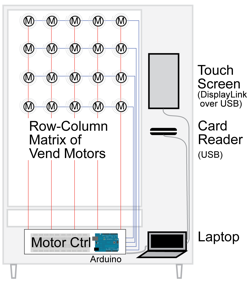

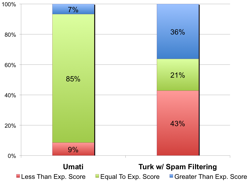

Communitysourcing helps solve this problem by bringing crowdsourcing work directly to crowds of expert workers, using kiosks that provide tasks and rewards and are situated within these experts' physical communities. As an example, we implemented Umati, the Communitysourcing Vending Machine, a vending kiosk with a touchscreen interface. We placed Umati within a specific community (e.g., students), provided work that fits their skill set (e.g., grading intro-level exams), and rewarded them with items they find personally valuable (e.g., candy). We found that Umati was able to generate more consistent and accurate grades than those we could generate using Mechanical Turk, at lower cost than paid expert graders.